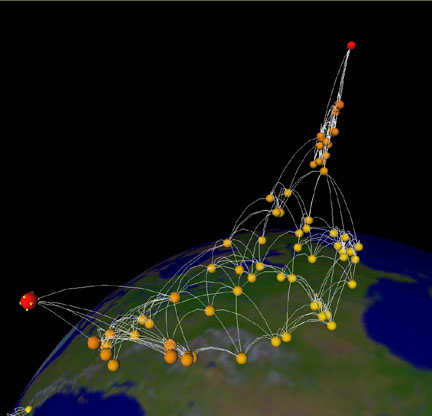

For the segmentation step we trained models with the TernausNetV1 architecture (a U-Net with a VGG11 encoder, not using pre-trained weights in our case for full details, see their paper and the source code) using Keras. We chose a semantic segmentation approach followed by object boundary tracing to identify building outlines. The source code and instructions to get the models up and running is available in a repo on CosmiQ Works’ GitHub. To highlight these and help challenge competitors get started, we built a set of baseline building footprint detection models and then evaluated their building detection performance. Mapping building footprints from imagery acquired at different nadir angles poses unique challenges, as detailed more below. For competition specifics and details about the underlying datasets, check out the Topcoder challenge website and the dataset announcement blog post. The SpaceNet 4: Off-nadir building detection challenge has begun, and participants are vying for $50,000 of prize money by competing to see who can most accurately identify buildings in 27 different WorldView 2 satellite image collects taken at different angles over Atlanta. For the first part of the series, click here. This post is part 2 of a series about the SpaceNet 4: Off-Nadir Dataset and Building Detection Challenge. Note: SpaceNet is a collaborative effort between CosmiQ Works, Digital Globe and Radiant Solutions hosted on Amazon Web Services as a public dataset. of Winter Applications of Computer Vision, 2019.Baseline building footprint predictions overlaid on an image from SpaceNet 4: Off-Nadir Building detection. Brown, "Semantic Stereo for Incidental Satellite Images," Proc. Brown, "2019 IEEE GRSS Data Fusion Contest: Large-Scale Semantic 3D Reconstruction ", IEEE Geoscience and Remote Sensing Magazine, 2019. of Computer Vision and Pattern Recognition, 2020.ī. Brown, "Learning Geocentric Object Pose in Oblique Monocular Images," Proc. 
of Computer Vision and Pattern Recognition EarthVision Workshop, 2021. Brown, "Single View Geocentric Pose in the Wild," Proc. If you use the extended US3D dataset, please cite the following papers: Test sets are available for the DFC19 challenge problems on CodaLab leaderboards. We plan to make test sets for all cities available for the geocentric pose problem in the near future. Test data is not provided for the DFC19 cities or for Atlanta. Validation data from DFC19 is extended here to include additional data for each tile. Cities LiDAR and vector data were made publicly available by the Homeland Security Infrastructure Program. All other commercial satellite images were provided courtesy of DigitalGlobe. New data for Atlanta was derived from public satellite images released for SpaceNet 4, licensed under a Creative Commons Attribution-ShareAlike 4.0 International License. Data for DFC19 was derived from public satellite images released for IARPA CORED. For details, please see our CVPR paper.Īll data used to produce the extended US3D dataset is publicly sourced. We added new reference data to this extended US3D dataset to enable training and validation of models to predict geocentric pose, defined as an object's height above ground and orientation with respect to gravity. These include the original DFC19 training and validation point clouds with full UTM coordinates to enable experiments requiring geolocation. For point cloud data, individual ZIP files are provided for each city from DFC19. Extra training data is also provided in separate TAR files. For image data, individual TAR files for training and validation are provided for each city. For more information about the contest, see the following links:ĭetailed information about the data content, organization, and file formats is provided in the README files.

Winning solutions from the contest, including model weights, are also now archived here. UPDATE: New data associated with our CVPRW'21 paper and the Overhead Geopose Challenge is now also archived here. We also add to the DFC19 data from Jacksonville, Florida and Omaha, Nebraska with new geographic tiles from Atlanta, Georgia. We provide additional geographic tiles to supplement the DFC19 training data and also new data for each tile to enable training and validation of models to predict geocentric pose, defined as an object's height above ground and orientation with respect to gravity. This dataset extends the Urban Semantic 3D (US3D) dataset developed and first released for the 2019 IEEE GRSS Data Fusion Contest (DFC19).
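To make the "segmentation followed by boundary tracing" idea concrete, here is a minimal pure-Python sketch of the post-processing half: it takes a per-pixel building probability map (the kind of output a segmentation network produces), thresholds it, groups pixels into connected blobs, and traces each blob's outline into a polygon. This is not the baseline's actual code. The function names, the 0.5 cutoff, and the toy probability map are all assumptions made for illustration, and the resulting polygons are in pixel-corner coordinates with no georeferencing.

```python
from collections import defaultdict, deque


def threshold(prob_map, cutoff=0.5):
    """Binarize a building probability map given as a list of rows."""
    return [[p >= cutoff for p in row] for row in prob_map]


def label_components(mask):
    """Group foreground pixels into 4-connected components (one per blob)."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    components = []
    for r in range(h):
        for c in range(w):
            if not mask[r][c] or seen[r][c]:
                continue
            seen[r][c] = True
            queue, pixels = deque([(r, c)]), set()
            while queue:
                y, x = queue.popleft()
                pixels.add((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            components.append(pixels)
    return components


def shoelace_area(ring):
    """Signed area of a closed ring of (x, y) vertices."""
    pairs = zip(ring, ring[1:] + ring[:1])
    return 0.5 * sum(x1 * y2 - x2 * y1 for (x1, y1), (x2, y2) in pairs)


def trace_outline(pixels):
    """Chain pixel edges that face the background into closed rings and
    return the ring with the largest area (the outer boundary)."""
    edges = defaultdict(list)  # start corner -> list of end corners
    for r, c in pixels:
        if (r - 1, c) not in pixels:  # top side, left to right
            edges[(c, r)].append((c + 1, r))
        if (r, c + 1) not in pixels:  # right side, top to bottom
            edges[(c + 1, r)].append((c + 1, r + 1))
        if (r + 1, c) not in pixels:  # bottom side, right to left
            edges[(c + 1, r + 1)].append((c, r + 1))
        if (r, c - 1) not in pixels:  # left side, bottom to top
            edges[(c, r + 1)].append((c, r))
    rings = []
    while edges:
        start = next(iter(edges))
        ring, point = [start], start
        while True:
            nxt = edges[point].pop()
            if not edges[point]:
                del edges[point]
            if nxt == start:
                break
            ring.append(nxt)
            point = nxt
        rings.append(ring)
    return max(rings, key=lambda ring: abs(shoelace_area(ring)))


def drop_collinear(ring):
    """Remove vertices that sit on a straight run between their neighbours."""
    n, kept = len(ring), []
    for i in range(n):
        (px, py), (x, y), (nx, ny) = ring[i - 1], ring[i], ring[(i + 1) % n]
        if (x - px) * (ny - y) - (y - py) * (nx - x) != 0:
            kept.append((x, y))
    return kept


def footprints(prob_map, cutoff=0.5):
    """Probability map -> list of polygons, one per connected blob."""
    mask = threshold(prob_map, cutoff)
    return [drop_collinear(trace_outline(px)) for px in label_components(mask)]


# Toy probability map (made up): a 2x2 blob and a 1x2 blob.
example = [
    [0.1, 0.2, 0.1, 0.0, 0.0],
    [0.1, 0.9, 0.8, 0.0, 0.0],
    [0.0, 0.7, 0.9, 0.0, 0.6],
    [0.0, 0.0, 0.0, 0.0, 0.8],
]
polygons = footprints(example)
for polygon in polygons:
    print(polygon)
```

In practice you would likely reach for an established raster-to-vector routine rather than hand-rolled tracing, and then map pixel coordinates to geographic ones using the image's geotransform; the sketch only shows why a clean segmentation mask is enough to recover building outlines.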
