It also provides a Find option to search for specific text in the opened file. These features are page navigation features, zoom in/out, etc. You can simply open a CHM file in it and then read it using the available features. However, it only shows text from the imported file. This software cannot open any file other than CHM, as it is entirely dedicated to reading CHM files. Read: Best PDF and eBook Reader Apps for Windows. As the name suggests, Free CHM Reader is dedicated free software that lets you read CHM files. A nice text-to-speech feature to listen to the text is also available in it. All in all, it is a good free book reader that also lets you view CHM files. It also offers a feature to enable night reading mode as per your requirements. Some nice reading features that you get in it are page navigation options, view bookmarks, search text, rotate the page, modify the font style, adjust line spacing, change text alignment, tweak background colors, change languages, etc. You can also read files like FB2, TXT, RTF, DOC, TCR, HTML, EPUB, CHM, PDB, and MOBI in this freeware. It is basically a free eBook reader that can also open and view CHM files. 3] Cool Reader: Cool Reader is free CHM reader software for Windows 11. See: Best Free Comic Book Readers for Windows. You can download the preferred version from. It comes in both portable and installer versions. For that, you can use its Save as feature that enables you to save CHM files in text format. You can also convert CHM to TXT using it. It also lets you open multiple CHM files in different tabs at a time. Some of these features include zoom in/out, rotate, enable double facing or single page view, fit page width, enable presentation or fullscreen view mode, page navigation options, and more. It offers some really good features that help in enhancing your reading experience. You can use it to read files in formats like DjVu, CBZ, CBR, XPS, EPUB, MOBI, FB2, PDB, etc.
Sumatra PDF is a free eBook reader that also lets you view CHM files. Read: Read DjVu books on PC using free DjVu Reader software or websites. Plus, you can find some handy tools in it such as an eBook downloader, eBook metadata editor, etc. It lets you convert CHM to formats like EPUB, MOBI, PDF, DOCX, RTF, TXT, and more. Calibre is one of the best eBook readers with which you can read CHM files as well as various other eBooks. The file will be opened in its E-book viewer window where you can read it. Apart from just viewing the CHM file, you can even convert it to other formats using its inbuilt eBook converter tool. Next, from the main interface, double-click on the CHM file you want to open. Now, press the Add books button to import your source CHM file into it. Just download and install calibre on your system and then launch the application. An online dictionary feature is also provided in it that lets you look for the meaning of a word or phrase on the web. Additionally, you can change font style, font color, text layout, background color, text color, etc., to view the CHM file.

Features like bookmarks, zoom in/out, page-flipping patterns, and more are available in it. You can find all the necessary reading tools in this software. It offers a built-in eBook reader that allows you to read CHM files. It is basically free open-source eBook management software that also lets you open and view CHM files. 1] Calibre: Calibre is free CHM file reader software for Windows and other operating systems. Let us discuss the above CHM readers in detail now. Here are the best free CHM file reader software and online tools: Best free CHM file reader software and online tools. So, check out the article to know the full list. Apart from that, there are a few online tools that you can use to view CHM files online in a web browser. In that case, this guide will help you find several free programs that let you read CHM documents on your PC. So, if you want to read a CHM file, you need a special application that supports the file format. Now, there is not much free software that allows you to view files with the CHM extension. It is basically used for help documentation and may consist of text, images, and hyperlinks. CHM, which stands for Compiled HTML, is a document format that contains HTML documents, images, JavaScript, and more in compressed form. These free programs and online services allow you to open and view CHM documents. Here is a list of the best free CHM file reader software and online tools.


Please take a quick gander at the contribution guidelines first. I did not continue an existing one. We have no monthly cost, but we have employees working hard to maintain the Awesome Go, with money raised we can repay the effort of each person involved! All billing and distribution will be open to the entire community. A curated list of awesome Go frameworks, libraries and software. It’s worth noting, I started the repo from scratch again after I modified the pack size. I plan on using this moving forwards at least for this Repo and the few others I have that are mainly immutable. But moving it between a local machine and a NAS was where it really helped because those transfers were not parallel. Moving the Repo: Using Rclone to download the whole repo was massively faster because of the Pack Size too. (Would be good to have the packer doing the next pack while the upload is going, or upload as it’s packing like Duplicacy.) Means the overall transfer rate takes a hit, but it’s still better. Waiting Times: It seems like packing has to finish before an upload can start, so I end up with a longer idle time between each transfer while the packer works. Check Times: Seem a little quicker, but Pruning still took a few hours surprisingly.

Transfer Rates: Much quicker sending to Google Drive and Wasabi. File Count: As expected, far fewer files. I haven’t tried to restore using the official binary yet to see what happens, but it didn’t look like a restore would complicate anything, only the commands that write. I did note that using ‘mount’ crashed out every time but it may not be anything to do with the Pack Size; I haven’t tried mount on the official binary.

I’ve stored about 4.7TB with it and haven’t had any issues so far doing multiple backup, check, prune or restore commands. I modified the Pack Size to be about 250MB (most of my files are immutable and are between 100MB-2GB in this repo) and have been using it for the last month. On the other hand, if you never delete anything, you might not really need to delete any snapshots, as you wouldn’t recover very much space, so the prune issue might be a moot point. You’d probably have to do some testing to determine the best pack size that meets your goal of having fewer files but doesn’t make prune operations download most of the data in the repository. If you have just a few large monolithic packs, prune is going to be a very painful process as it will likely have to rewrite most of the packs in the repository when you do your weekly/monthly prune. When you forget some snapshots and prune, restic tries to discard all unused objects, which requires the packs containing these objects to be rewritten and consolidated. The bigger issue with large pack sizes is that even tree objects are stored in packs. Although I dunno what this would mean for the handful of smaller project/meta files that do change occasionally. For me at least, it’s only the Repo that has backups from my Laptops that it makes sense having smaller chunks, because files on there change often. I’m not sure if Google My Drive has the same limitation, but figured it would be worth getting ahead of the growth issue in general. Forcing the file sizes to 100-250MB apiece seemed like it would probably help a lot for my situation. Google’s Team Drives have a limit of 400,000 files per drive, and all my Repos already have > 1m files. Having them split up into 1-8MB pieces makes using Cloud storage quite slow and restrictive… I’m currently using Wasabi, but was hoping to migrate to using Google Drive. They do however get reorganized and renamed.
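To put rough numbers on the file-count concern, here is a back-of-the-envelope sketch in Python. It assumes decimal units (1TB = 10^12 bytes), pretends every pack is exactly the nominal size, and ignores index and snapshot files, so treat the output as an estimate only. The 4.7TB repo size and the 400,000-file Team Drive limit are the figures quoted in this thread; the function name is made up for illustration.

```python
TEAM_DRIVE_FILE_LIMIT = 400_000  # Team Drive limit quoted in the post


def estimated_pack_files(repo_bytes: int, pack_bytes: int) -> int:
    """Rough pack-file count if every pack were exactly pack_bytes (ceiling division)."""
    return (repo_bytes + pack_bytes - 1) // pack_bytes


REPO_BYTES = 4_700_000_000_000  # about 4.7TB, decimal units assumed

for pack_mb in (1, 8, 100, 250):
    count = estimated_pack_files(REPO_BYTES, pack_mb * 1_000_000)
    status = "over" if count > TEAM_DRIVE_FILE_LIMIT else "under"
    print(f"{pack_mb:>4}MB packs: ~{count:,} files ({status} the limit)")
```

Under those assumptions, 8MB packs work out to roughly 587,500 files (over the limit), while 250MB packs come to about 18,800.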

These files “never” (maybe in some distant future) get deleted, and only more get added. The main reason was because I have some large Repos that are made up of predominantly very large ProRes video files.

I’d come across a post that talked about it and it seemed like it might have been a hidden flag or something, but was probably just Forked code.

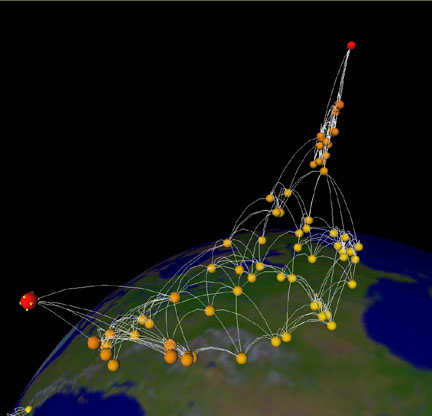

For the segmentation step we trained models with the TernausNetV1 architecture (a U-Net with a VGG11 encoder, not using pre-trained weights in our case; for full details, see their paper and the source code) using Keras. We chose a semantic segmentation approach followed by object boundary tracing to identify building outlines. The source code and instructions to get the models up and running are available in a repo on CosmiQ Works’ GitHub. To highlight these and help challenge competitors get started, we built a set of baseline building footprint detection models and then evaluated their building detection performance. Mapping building footprints from imagery acquired at different nadir angles poses unique challenges, as detailed below. For competition specifics and details about the underlying datasets, check out the Topcoder challenge website and the dataset announcement blog post. The SpaceNet 4: Off-nadir building detection challenge has begun, and participants are vying for $50,000 of prize money by competing to see who can most accurately identify buildings in 27 different WorldView-2 satellite image collects taken at different angles over Atlanta. For the first part of the series, click here. This post is part 2 of a series about the SpaceNet 4: Off-Nadir Dataset and Building Detection Challenge. Note: SpaceNet is a collaborative effort between CosmiQ Works, DigitalGlobe and Radiant Solutions hosted on Amazon Web Services as a public dataset. of Winter Applications of Computer Vision, 2019. Baseline building footprint predictions overlaid on an image from SpaceNet 4: Off-Nadir Building detection. Brown, "Semantic Stereo for Incidental Satellite Images," Proc. Brown, "2019 IEEE GRSS Data Fusion Contest: Large-Scale Semantic 3D Reconstruction", IEEE Geoscience and Remote Sensing Magazine, 2019. of Computer Vision and Pattern Recognition, 2020. Brown, "Learning Geocentric Object Pose in Oblique Monocular Images," Proc.
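To make the "segmentation, then boundary extraction" idea concrete, here is a minimal pure-Python sketch. It is a simplified stand-in and not the baseline's code (that lives in the CosmiQ Works repo): it takes a binary mask such as a thresholded segmentation output, groups foreground pixels into 4-connected blobs, and collects each blob's boundary pixels rather than tracing an ordered polygon. The function name and the toy mask are made up for illustration.

```python
from collections import deque


def building_outlines(mask):
    """Return, for each 4-connected foreground blob, its sorted boundary pixels.

    A pixel counts as boundary if any 4-neighbour is background or lies
    outside the image.
    """
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    outlines = []
    for r0 in range(rows):
        for c0 in range(cols):
            if not mask[r0][c0] or seen[r0][c0]:
                continue
            # Breadth-first flood fill over one blob.
            seen[r0][c0] = True
            queue = deque([(r0, c0)])
            boundary = []
            while queue:
                r, c = queue.popleft()
                on_edge = False
                for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    nr, nc = r + dr, c + dc
                    inside = 0 <= nr < rows and 0 <= nc < cols
                    if not inside or not mask[nr][nc]:
                        on_edge = True
                    elif not seen[nr][nc]:
                        seen[nr][nc] = True
                        queue.append((nr, nc))
                if on_edge:
                    boundary.append((r, c))
            outlines.append(sorted(boundary))
    return outlines


# Toy mask: a 3x3 "building" and a 2x2 "building".
mask = [
    [0, 0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0, 0, 0],
    [0, 1, 1, 1, 0, 1, 1],
    [0, 1, 1, 1, 0, 1, 1],
    [0, 0, 0, 0, 0, 0, 0],
]
outlines = building_outlines(mask)
print(len(outlines), "footprints found")
```

In a real pipeline the boundary pixels would normally be converted to ordered polygons with a dedicated library; that step is left out here to keep the sketch dependency-free.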
of Computer Vision and Pattern Recognition EarthVision Workshop, 2021. Brown, "Single View Geocentric Pose in the Wild," Proc. If you use the extended US3D dataset, please cite the following papers: Test sets are available for the DFC19 challenge problems on CodaLab leaderboards. We plan to make test sets for all cities available for the geocentric pose problem in the near future. Test data is not provided for the DFC19 cities or for Atlanta. Validation data from DFC19 is extended here to include additional data for each tile. Cities LiDAR and vector data were made publicly available by the Homeland Security Infrastructure Program. All other commercial satellite images were provided courtesy of DigitalGlobe. New data for Atlanta was derived from public satellite images released for SpaceNet 4, licensed under a Creative Commons Attribution-ShareAlike 4.0 International License. Data for DFC19 was derived from public satellite images released for IARPA CORE3D. For details, please see our CVPR paper. All data used to produce the extended US3D dataset is publicly sourced. We added new reference data to this extended US3D dataset to enable training and validation of models to predict geocentric pose, defined as an object's height above ground and orientation with respect to gravity. These include the original DFC19 training and validation point clouds with full UTM coordinates to enable experiments requiring geolocation. For point cloud data, individual ZIP files are provided for each city from DFC19. Extra training data is also provided in separate TAR files. For image data, individual TAR files for training and validation are provided for each city. For more information about the contest, see the following links: Detailed information about the data content, organization, and file formats is provided in the README files.

Winning solutions from the contest, including model weights, are also now archived here. UPDATE: New data associated with our CVPRW'21 paper and the Overhead Geopose Challenge is now also archived here. We also add to the DFC19 data from Jacksonville, Florida and Omaha, Nebraska with new geographic tiles from Atlanta, Georgia. We provide additional geographic tiles to supplement the DFC19 training data and also new data for each tile to enable training and validation of models to predict geocentric pose, defined as an object's height above ground and orientation with respect to gravity. This dataset extends the Urban Semantic 3D (US3D) dataset developed and first released for the 2019 IEEE GRSS Data Fusion Contest (DFC19).

When speaking of lzma, which is what these compressors have in common (except gzip), it should be noted that it originated in 7-zip, which is a great free open source cross-platform archiver by Igor Pavlov. Switching from gzip to xz (or another lzma based compressor) seems like a no-brainer though: it compresses slower than gzip but that doesn't affect the end users, the compression is much better and the decompression speed is only slightly slower. There is a discussion of perhaps using it (lrzip) for package compression on Arch Linux (which currently uses xz), but it's just a suggestion at this point and warrants further investigation. Then there's lrzip (from Con Kolivas of brain-fuck scheduler fame) which combines a long range compressor with lzma. pdf: tar -xf --wildcards '*. Another (maybe better) option could be lzip but it was denied in Debian, see. I had not heard of lzip until now; however, it is yet another compressor based upon lzma, just like xz. I don't know if there is any benefit with lzip compared to xz.
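For anyone who wants to try the gzip versus xz trade-off on their own data, here is a small sketch using Python's standard-library `gzip` and `lzma` modules (not the command-line tools), at their default levels. The sample text is an arbitrary, highly repetitive stand-in, so the sizes and timings it prints are only indicative; real inputs will behave differently.

```python
import gzip
import lzma
import time

# Repetitive ASCII sample; swap in your own bytes for a meaningful comparison.
sample = ("The quick brown fox jumps over the lazy dog. " * 20000).encode("ascii")


def measure(name, compress, decompress):
    """Compress the sample, verify the round trip, and report size and time."""
    start = time.perf_counter()
    packed = compress(sample)
    elapsed = time.perf_counter() - start
    assert decompress(packed) == sample  # make sure nothing was lost
    print(f"{name}: {len(sample):,} -> {len(packed):,} bytes in {elapsed:.3f}s")
    return len(packed)


gzip_size = measure("gzip", gzip.compress, gzip.decompress)
xz_size = measure("xz", lzma.compress, lzma.decompress)  # lzma defaults to the .xz format
```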

The --wildcards option allows you to extract files of a specific format from a tar.xz file. For example, to extract only the files whose names end in. Similarly, you can extract specific directories from the tar.xz file with the following command: tar -xf dir1 dir2 Lzip vs xz: Xz has a complex format, partially specialized in the compression of executables and designed to be extended by proprietary formats. You need to list the space-separated file names to be extracted after the archive name to extract specific files from a tar.xz file: tar -xf filename1 filename2 Its lowest compression level is as fast as gzip with better compression, and its highest compression level is better than bzip2. It supports two variants of the LZMA algorithm, one really fast and one normal used by all compression levels except 0. It’s easy to extract specific files from the archive. lzip is a lossless data compression program which can be used with tar for compressing archives. xz and 7zip are known to have a better compression algorithm than gzip, but use more memory and time to compress/decompress. How To Extract Specific Files From a tar.xz File: Tools to compress/decompress xz and gzip files are also available on Windows systems, but are more commonly seen and used on UNIX systems. Run the following command to extract files into your desired directory, as the tar command extracts files into the current directory by default. tar -xvf Extract or Unzip files into the specific directory: It works using the LZMA algorithms, as also used in 7z, so the results should be rather. The -T flag specifies the number of threads (e.g., -T 4 uses four threads). Run the following command to display the list of the files being extracted on the terminal with the -v option. Xz is another piece of software that aims to replace gzip by offering similar options and syntax. The compression utility xz is, itself, threaded. Run the following command to extract or unzip a tar.xz file in Linux: tar -xf
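The same ideas (extracting into a chosen directory and pulling out only files that match a pattern) can also be sketched with Python's standard `tarfile` module instead of the tar command. This is an illustration and not a replacement for the commands in this tutorial: the archive name, the member names, and the `*.pdf` pattern are all made up, and the script builds its own small archive in a temporary directory so that it runs anywhere.

```python
import fnmatch
import io
import tarfile
import tempfile
from pathlib import Path

workdir = Path(tempfile.mkdtemp())
archive = workdir / "example.tar.xz"  # hypothetical archive name

# Build a small .tar.xz so the sketch is self-contained.
with tarfile.open(archive, "w:xz") as tar:
    for name, data in [("docs/a.pdf", b"pdf-a"), ("docs/b.txt", b"txt-b"), ("c.pdf", b"pdf-c")]:
        info = tarfile.TarInfo(name)
        info.size = len(data)
        tar.addfile(info, io.BytesIO(data))

dest = workdir / "out"  # target directory instead of the current one
dest.mkdir()

# "r:*" lets tarfile detect the compression from the file itself.
with tarfile.open(archive, "r:*") as tar:
    wanted = [m for m in tar.getmembers() if fnmatch.fnmatch(m.name, "*.pdf")]
    tar.extractall(path=dest, members=wanted)

extracted = sorted(p.relative_to(dest).as_posix() for p in dest.rglob("*") if p.is_file())
print(extracted)
```

Only `c.pdf` and `docs/a.pdf` end up under the output directory; `docs/b.txt` is skipped because it does not match the pattern. Note that `fnmatch` lets `*` match across `/`, which is why the file inside `docs/` is picked up.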

xz file using the standard tar command: tar -xf file. Today's zip tools support LZMA, so your ratio should be close to that of xz. Unrar Windows, supports RAR, ZIP, LZIP, GZIP, TAR files and 7zip files. Xz is the obvious choice here, but use normal zip if compatibility matters: it's such a widely adopted standard that you have nothing to worry about. Extracting tar.xz files in Linux with tar command: I like using xz with par2 for data redundancy in case of file damage. Archiving only. Tip: Both GNU and BSD tar automatically do decompression delegation for bzip2, compress, gzip, lzip, lzma, lzop, zstd, and xz compressed archives. The tar command or tool is pre-installed by default on most Linux distributions. Of course there are also tools that do both, which tend to additionally offer encryption, error detection and recovery. However, BZip2, LZip and XZ have no metadata (GZip has a little) so using them without something like a Tar file makes. Xz uses the LZMA algorithm to compress files in Linux. We will use the tar command to unzip files like gzip, bzip2, lzip, lzma, lzop, and xz. So, in this tutorial post, we are going to show you the method for extracting tar.xz files in Linux-based operating systems. lzip is capable of creating archives with independently decompressible data sections called a 'multimember archive' (as well as split output for the creation of multivolume archives). For example, if the underlying file is a tar archive, this can allow extracting any undamaged files, even if other parts of the archive are damaged. If compression time is more important than compression ratio, Gzip beats XZ.

Like xz, it uses LZMA compression, but, instead of creating. It’s really hectic when you don’t know this simple command, but Linux is all about learning and doing things. Compression is useless without the ability to decompress it. In 2008, Antonio Diaz released a similar utility called lzip. One of the frustrations for beginner Linux users is extracting tar.xz files on Linux-based operating systems. This article will test three of the most common compression tools by compressing a 100MB ASCII file at all available compression levels and comparing compression level against compression speed. Tutorial To Extract tar.xz File In Linux Operating System
